Abstract

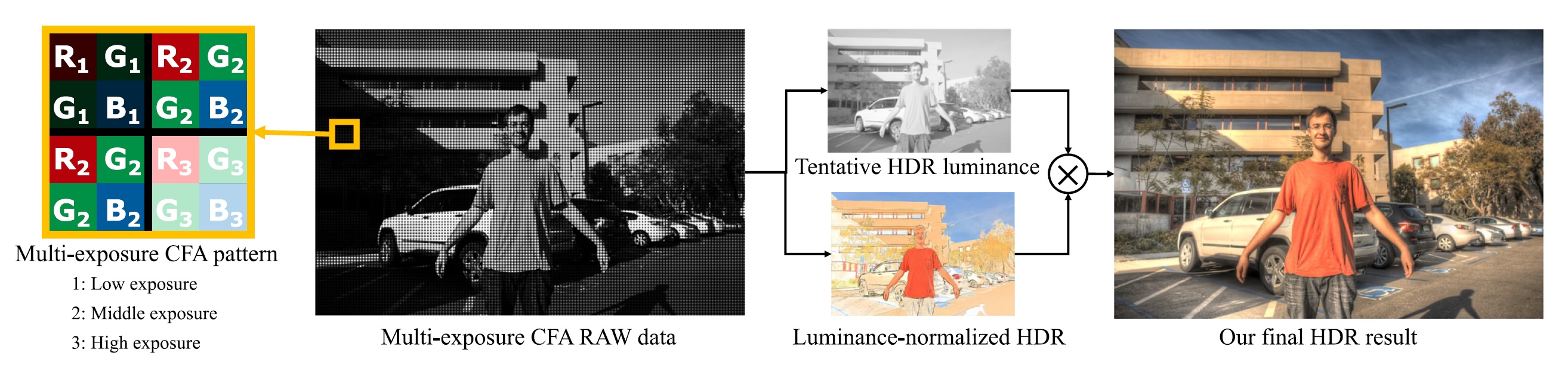

In this paper, we propose a deep snapshot high dynamic range (HDR) imaging framework that can effectively reconstruct an HDR image from the RAW data captured using a multi-exposure color filter array (ME-CFA), which consists of a mosaic pattern of RGB filters with different exposure levels. To train the HDR image reconstruction network effectively, we introduce the idea of luminance normalization, which simultaneously enables effective loss computation and input data normalization by considering relative local contrasts in the “normalized-by-luminance” HDR domain. This idea enables the network to handle errors in bright and dark areas equally, regardless of absolute luminance levels, which significantly improves the visual image quality. Experimental results using public HDR image datasets demonstrate that our framework outperforms other snapshot methods and produces high-quality HDR images with fewer visual artifacts, resulting in an improvement of more than 4 dB in color peak signal-to-noise ratio in the linear HDR domain.
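As a rough illustration of why normalizing by luminance balances errors across bright and dark areas, the sketch below computes an L1 error divided by a locally averaged luminance. It is not the formulation used in the paper: the function names (`luminance`, `local_mean`, `luminance_normalized_l1`), the Rec. 709 luminance weights, the box-shaped local window, and the `eps` stabilizer are all assumptions chosen for illustration.

```python
import numpy as np


def luminance(hdr):
    """Luminance of a linear RGB image of shape (H, W, 3).

    Rec. 709 weights are an assumption; the paper may use a different estimate.
    """
    return hdr @ np.array([0.2126, 0.7152, 0.0722])


def local_mean(x, radius=2):
    """Box-filter local mean of a 2-D array, with edge replication at borders."""
    k = 2 * radius + 1
    padded = np.pad(x, radius, mode="edge")
    out = np.zeros(x.shape, dtype=np.float64)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + x.shape[0], dx:dx + x.shape[1]]
    return out / (k * k)


def luminance_normalized_l1(pred, target, radius=2, eps=1e-6):
    """Mean absolute error after dividing by the target's local luminance.

    Dividing by luminance turns absolute errors into relative ones, so a
    10% error counts the same in a dark region as in a bright one.
    """
    lum = local_mean(luminance(target), radius)
    return float(np.mean(np.abs(pred - target) / (lum[..., None] + eps)))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    target = rng.uniform(0.1, 1.0, size=(16, 16, 3))
    pred = target * 1.1  # uniform 10% relative error

    for scale in (1.0, 1000.0):
        plain = float(np.mean(np.abs(pred - target) * scale))
        normed = luminance_normalized_l1(pred * scale, target * scale)
        print(f"scale={scale:g}  plain L1={plain:.4f}  normalized L1={normed:.4f}")
```

In this sketch the plain L1 error grows with the brightness scale, while the normalized error stays essentially unchanged, which is the behavior the abstract attributes to working in the normalized-by-luminance domain.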

Publications

- Deep Snapshot HDR Imaging Using Multi-Exposure Color Filter Array [Official Access] [PDF]

  Yutaro Okamoto, Masayuki Tanaka, Yusuke Monno, and Masatoshi Okutomi

  The Visual Computer, 2023 (Early Access).

- Deep Snapshot HDR Imaging Using Multi-Exposure Color Filter Array [Project]

  Takeru Suda, Masayuki Tanaka, Yusuke Monno, and Masatoshi Okutomi

  Asian Conference on Computer Vision (ACCV), 2020.