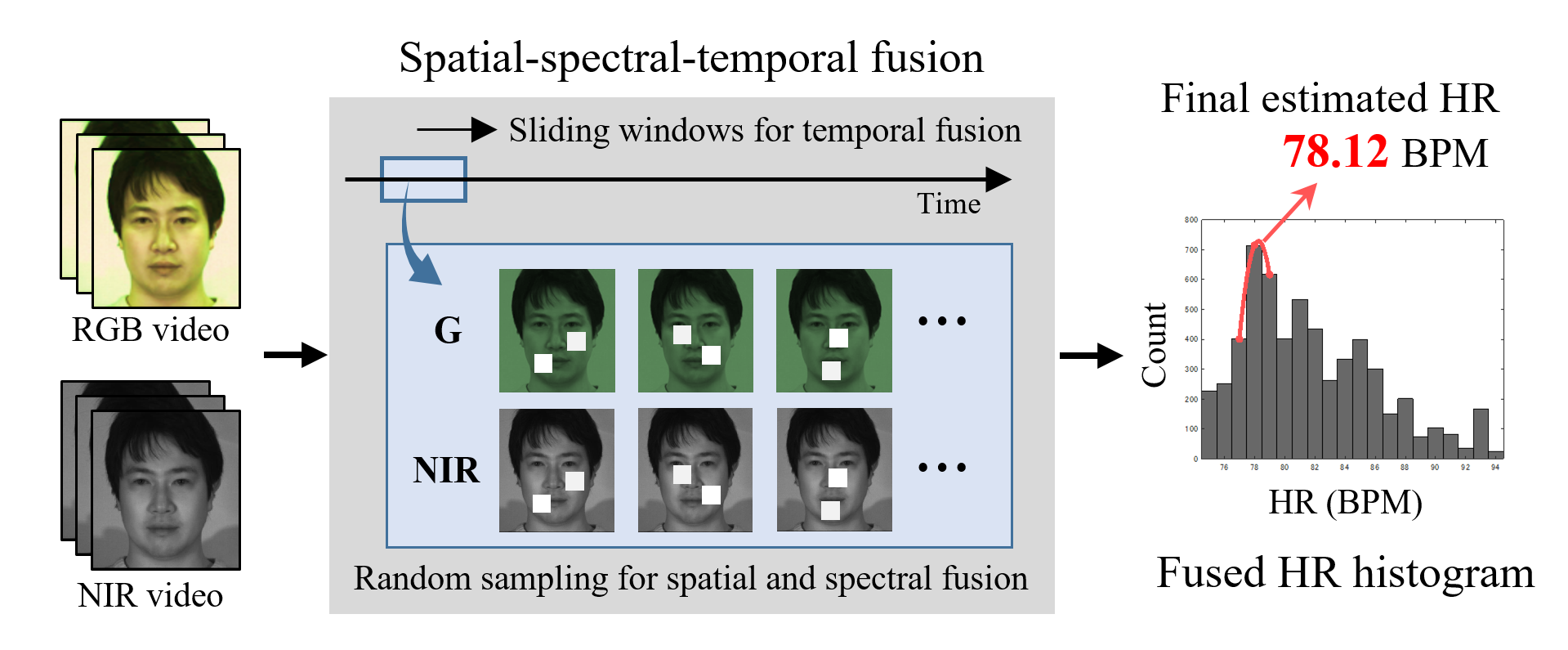

Spatial-Spectral-Temporal Fusion for Remote Heart Rate Estimation

Shiika Kado, Yusuke Monno, Kazunori Yoshizaki, Masayuki Tanaka, Masatoshi Okutomi

IEEE Sensors Journal, vol. XX, no. XX, pp. XXXX-XXXX, 20XX (To appear)

Proposed Algorithm Overview

Abstract

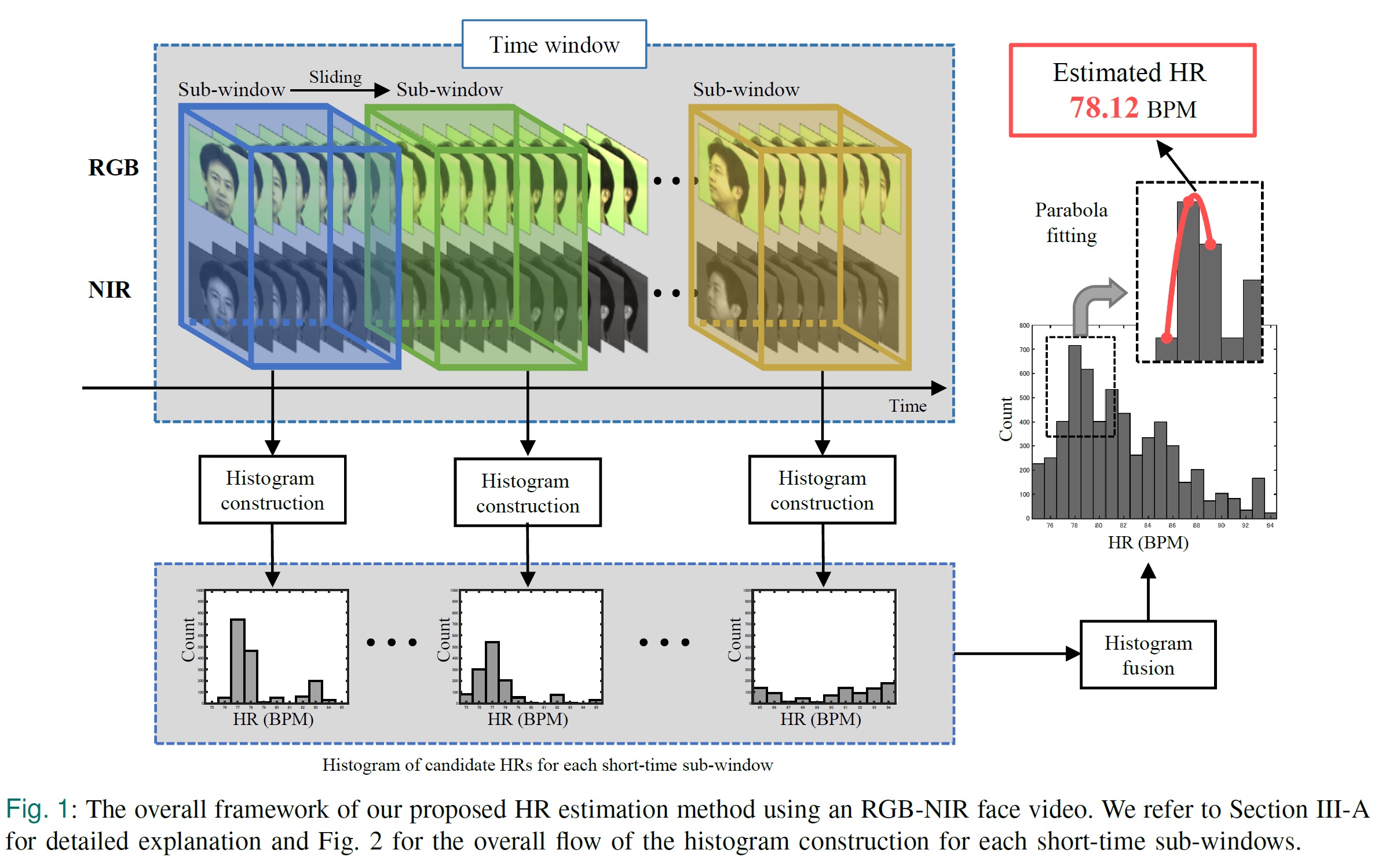

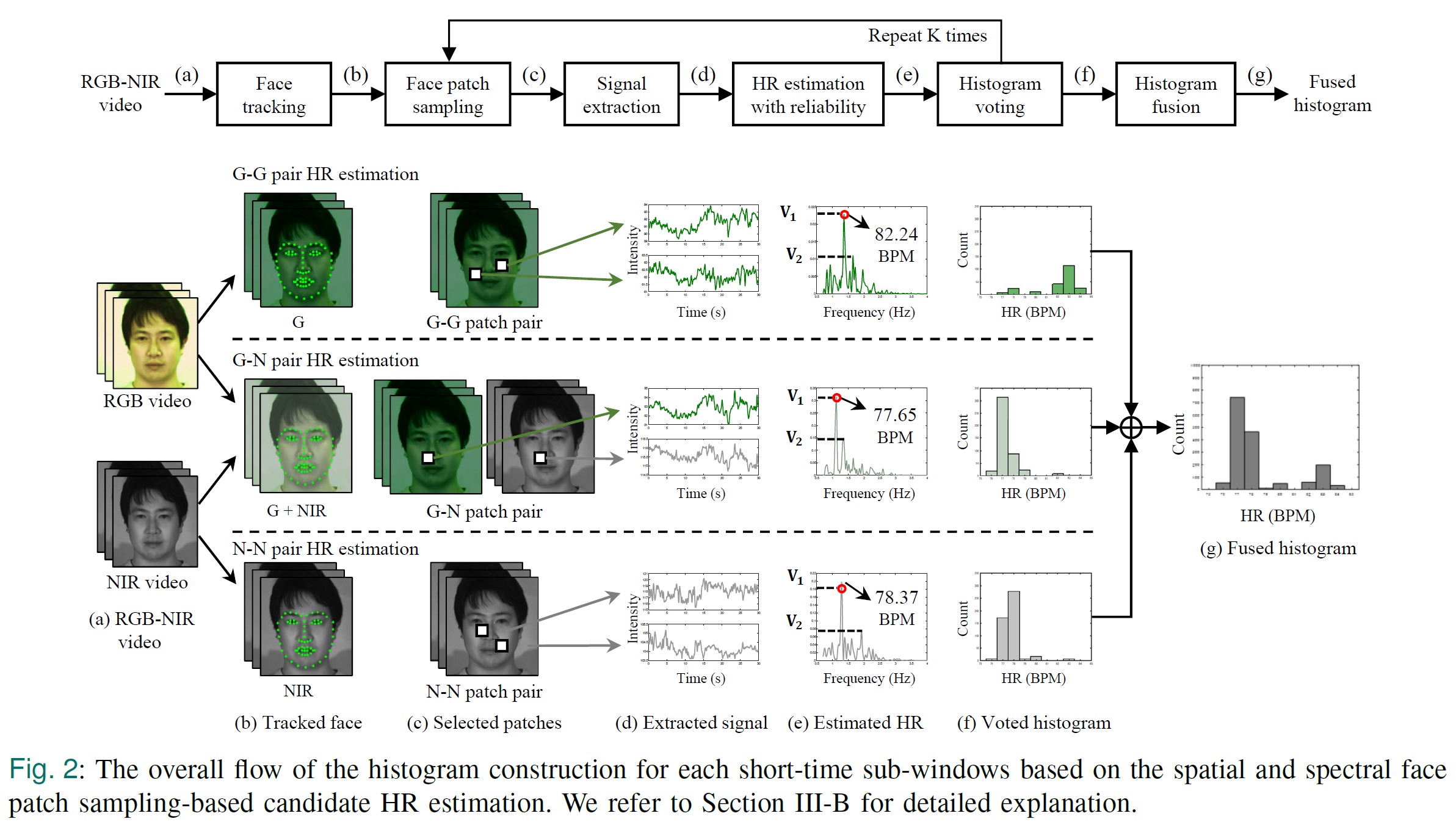

In this paper, we propose a novel heart rate (HR) estimation method that uses simultaneously recorded RGB and near-infrared (NIR) face videos to improve the robustness of camera-based remote HR estimation against illumination fluctuations and head motions. The key to robust HR estimation is constructing a histogram of HRs for a considered time window by voting on candidate HRs that are estimated using different spatial face patches, spectral modalities (i.e., RGB and NIR), and temporal short-time sub-windows. Histogram voting is performed only for the candidate HRs that pass a reliability check of HR estimation. The final HR estimate for the considered time window is then obtained by detecting the most frequently voted HR bin and performing parabola fitting using its neighboring bins. By spatially, spectrally, and temporally fusing the candidate HRs for majority voting, our method can automatically exploit suitable video sub-regions that are less affected by illumination fluctuations and head motions, enabling robust HR estimation. Through experiments on 168 RGB-NIR video recordings, we demonstrate that our fusion-based method achieves improved HR estimation accuracy compared with existing methods.
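
The voting and peak-refinement step described above can be illustrated with a minimal sketch. This is not the authors' implementation: the function name `fuse_candidate_hrs`, the reliability scores and their threshold, the HR range, and the bin width are all assumptions chosen for illustration, and the reliability check itself is reduced to a simple score comparison.

```python
import numpy as np


def fuse_candidate_hrs(candidates, reliabilities, min_reliability=0.5,
                       hr_range=(40.0, 180.0), bin_width=2.0):
    """Vote reliable candidate HRs (bpm) into a histogram and refine the peak.

    All parameter defaults are illustrative assumptions, not values from the paper.
    Returns the fused HR in bpm, or None if no candidate can vote.
    """
    candidates = np.asarray(candidates, dtype=float)
    reliabilities = np.asarray(reliabilities, dtype=float)

    # Reliability check (assumed form): only candidates whose score reaches
    # the threshold are allowed to vote.
    voters = candidates[reliabilities >= min_reliability]
    if voters.size == 0:
        return None

    # Histogram voting over fixed-width HR bins.
    edges = np.arange(hr_range[0], hr_range[1] + bin_width, bin_width)
    counts, edges = np.histogram(voters, bins=edges)
    if counts.sum() == 0:
        return None  # every voter fell outside the HR range
    centers = 0.5 * (edges[:-1] + edges[1:])

    # Most frequently voted bin.
    k = int(np.argmax(counts))
    if k == 0 or k == len(counts) - 1:
        return float(centers[k])  # no neighbor on one side, skip refinement

    # Parabola through the peak bin and its two neighbors; the vertex gives
    # a sub-bin offset in units of bin_width.
    left, mid, right = counts[k - 1], counts[k], counts[k + 1]
    denom = left - 2 * mid + right
    if denom == 0:
        return float(centers[k])  # flat top, keep the bin center
    offset = 0.5 * (left - right) / denom
    return float(centers[k] + offset * bin_width)


if __name__ == "__main__":
    # Candidates from hypothetical patches, modalities, and sub-windows.
    hrs = [70.5, 71.0, 71.5, 71.9, 72.5, 73.0, 120.0]
    scores = [1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 0.1]
    print(fuse_candidate_hrs(hrs, scores))
```

In this toy input, the outlier at 120 bpm fails the assumed reliability check and never votes; the peak bin covers 70 to 72 bpm, and the parabola fit shifts the estimate toward the more populated right-hand neighbor, giving about 71.3 bpm.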

Algorithm Flowcharts

Publications

- Spatial-Spectral-Temporal Fusion for Remote Heart Rate Estimation [pdf]

Shiika Kado, Yusuke Monno, Kazunori Yoshizaki, Masayuki Tanaka, Masatoshi Okutomi

IEEE Sensors Journal, vol. XX, no. XX, pp. XXXX-XXXX, 20XX (To appear)

- Remote Heart Rate Measurement from RGB-NIR Video Based on Spatial and Spectral Face Patch Selection [pdf] [slide]

Shiika Kado, Yusuke Monno, Kenta Moriwaki, Kazunori Yoshizaki, Masayuki Tanaka, Masatoshi Okutomi

International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC2018), pp. 5676-5680, July 2018.